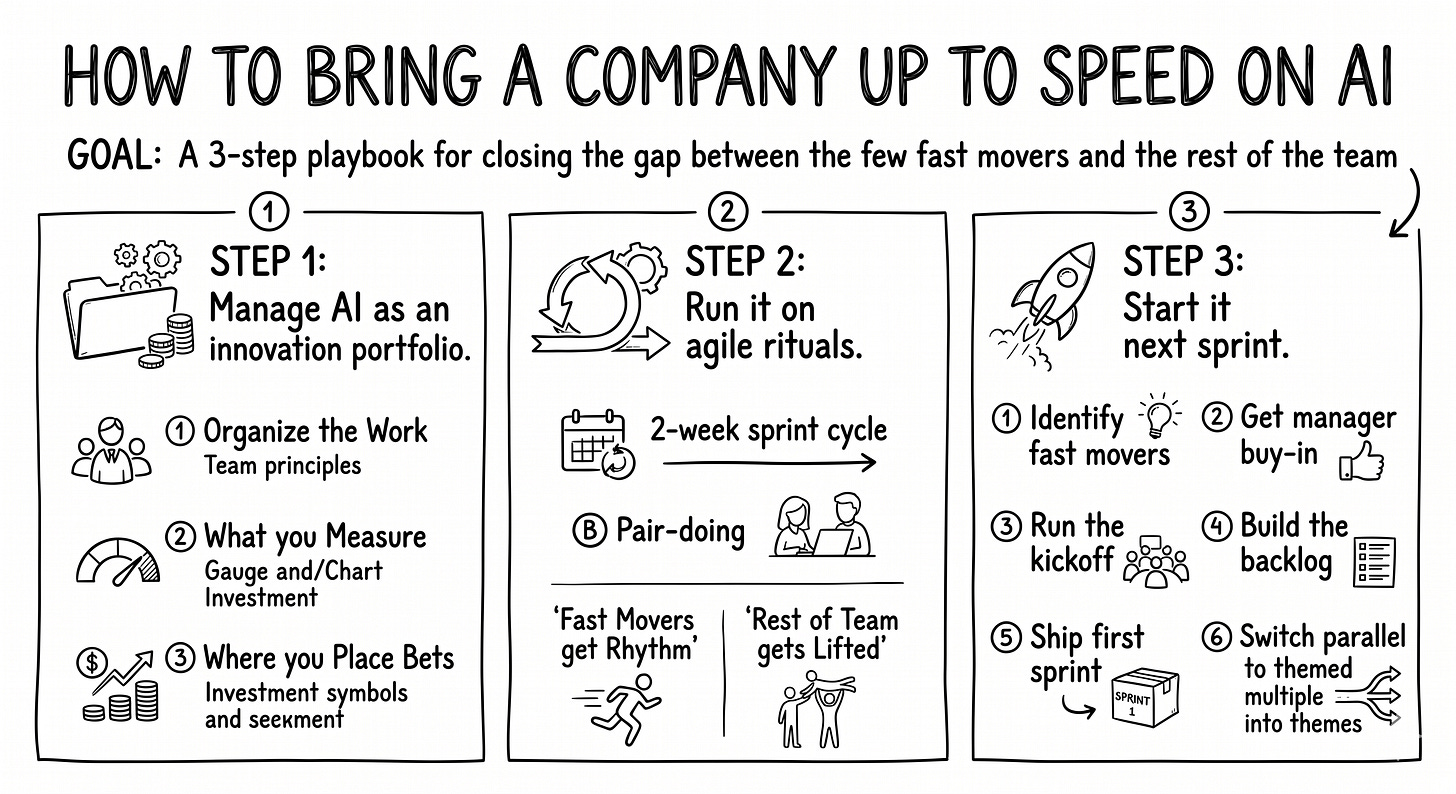

How to bring a company up to speed on AI

Identify the fast movers. Build a shared backlog. Run 2-week sprints with a single owner per topic. Pair-do with the rest of the team.

It’s Monday morning. You’re scanning your week. Leadership offsite, three product reviews, a check-in with each of your team leads. And the same pattern keeps showing up. In every team across the company, two or three people have AI prompts pinned to their second monitor and a half-finished agent in their personal repo. The rest haven’t really started. You’ve been telling yourself this gap is fine. Early days. Give it time. But it’s been months, and the gap keeps widening across every team you look at. The fast ones are getting faster. The rest aren’t moving.

You don’t have a process problem. You have a channeling problem. The energy is there, scattered across the company. It’s just spilling.

You may have read the headline. MIT’s 2025 NANDA report found that 95% of enterprise GenAI pilots deliver no measurable P&L impact. The press read it as a scandal. It isn’t. Five percent succeeding is roughly what any innovation portfolio produces, in any domain, in any decade. That’s the math of betting on early ideas. The real question isn’t whether your pilots will fail. It’s whether they’ll fail in a way that teaches you anything.

I’ve installed this in several companies, and the same pattern shows up every time. Each team has its few fast movers and its many non-starters, and the gap compounds without coordination. Eighteen months into AI going mainstream, that gap has stopped closing on its own. The thing that actually works is the lightest possible governance: three innovation principles plus four agile rituals. Common sense. Borrowed from places you trust. Applied to AI.

If you’ve tried something like this and watched it fizzle (a guild, an AI team, an AI working group), stay with me. The networks I’ve watched fail didn’t fail because the framework was wrong. They failed because one of four conditions stopped holding underneath. We’ll work from the innovation principles to the rituals, and finish with the four conditions that keep the system alive. The result is a playbook you can start running next sprint without repeating the same fizzle.

Manage AI as an innovation portfolio: three principles to borrow before you build any rhythm.

Run it on agile rituals: a 2-week cycle, a pair-doing move, and four conditions that keep the system alive.

Start it next sprint, in six concrete moves.

The first principle is about organization. Two debates live there, and they have the same answer.

Manage AI as an innovation portfolio

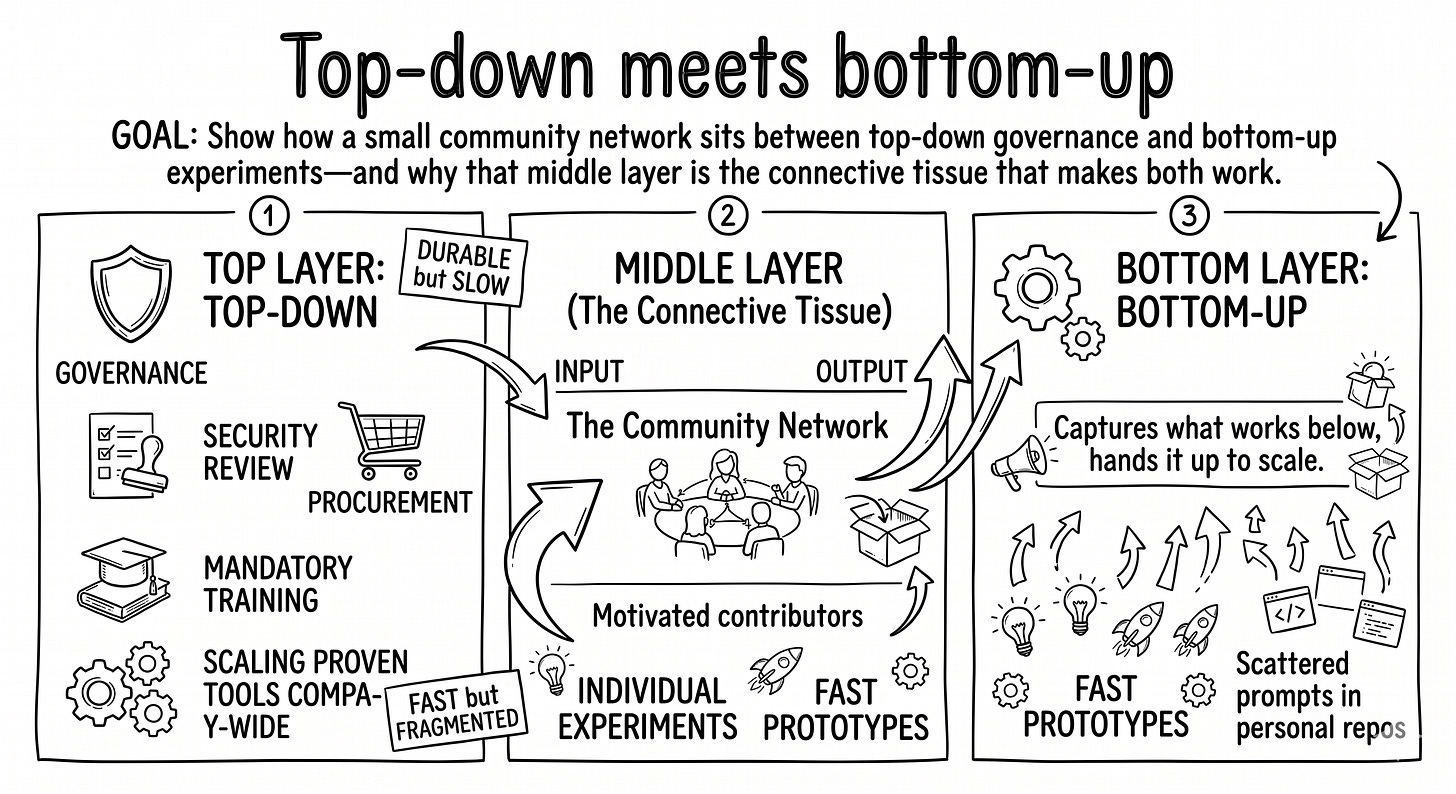

Combine top-down and bottom-up

Debate 1: top-down or bottom-up. Most leaders facing AI default to one or the other. Top-down: “the platform team will pick the tools and roll them out.” Bottom-up: “everyone will figure it out on their own.” Both are partial.

Top-down is the right move for mid-term consolidation and governance. Once a tool, prompt library, or skill has proven its value, you need top-down to scale it company-wide: security review, legal sign-off, procurement, mandatory training, integration with existing systems. That’s all real. The problem is when top-down is your only AI strategy. The AI landscape moves faster than any procurement cycle.

Bottom-up is the right move for short-term experimentation and speed. Motivated people moving fast on their own tasks is how you discover what actually works. Without it, you have a strategy with no signal. The problem is when bottom-up is your only strategy. Three teams build three different prompt libraries that don’t talk to each other. Six other teams don’t start at all because nobody told them where to begin. Within six months, the gap is structural, and the libraries don’t merge cleanly into anything reusable.

You need both. The challenge is connecting them. What connects them is a small protected community in between: it lets bottom-up energy run, captures what works, and hands it to top-down once it’s ready to scale. Etienne Wenger described this pattern in 1998, building on a term he and Jean Lave coined in 1991. He called it a community of practice: a group that shares a concern, learns by doing, and decides what’s good enough to scale. AI is the latest domain it applies to.

Debate 2: dedicated team or embedded innovation. A close cousin of debate 1. Some companies create a dedicated innovation team. Others leave innovation in each existing team. Same answer: both, ideally hybrid. A dedicated AI core team owns the cross-cutting work (a shared prompt library, an internal Claude skill catalog, the shared backlog itself). Embedded contributors in each team own the process improvements specific to their domain. They know their work best. If you can only run one, run embedded. Their proximity to the actual work beats the dedicated team’s coordination advantage. Running both, knit through the same network, is what scales.

The community network is the operational form of both answers. Top-down meets bottom-up there. Dedicated meets embedded there. The network is where the connection happens.

Once the connective layer exists, the next question is how to track the work happening inside it. AI experiments aren’t projects.

Stop tracking AI work like projects

The second principle is about measurement: AI work is innovation, not a project. What you track has to change.

A normal project tracks planning, budget, and deliverables. An innovation effort tracks pivots, failures, and learnings. The two look similar from a distance. Up close, they’re opposites.

What makes AI work specifically weird: the technology shifts under your feet faster than your sprint cadence. The Mistral model that beat your benchmark in March will be replaced by July. The Claude skill that solved your QA problem in Q1 may be obsolete by Q3 when the next context-engineering pattern replaces it. Outputs go stale. Learnings don’t. That’s the shift you’re really making: from tracking what shipped to tracking what you understood.

Three concrete shifts in measurement:

Expect 80% of experiments to fail. Not “tolerate.” Expect. The 95% pilot-failure rate from the intro is what you get when nobody runs AI work as a portfolio: pilots commissioned, run, and forgotten. Inside a working network the rate drops, but it stays high on purpose. Eighty percent is the target, not the accident. If your hit rate is much higher than 20%, you’re picking targets that are too safe. If it’s much lower, you’re picking targets that are too speculative. The 80% is how you find the 20% that scales.

Measure learnings, not outputs. What did you learn from the failed experiments? Which assumption was wrong? What would you try differently next time? A failed experiment with a clear lesson is more valuable than a successful one with no insight.

Don’t celebrate failure for its own sake. Some companies overdo this: failure parties, “fail fast” t-shirts. That’s theater. A useful failure is one where you learned something. A wasted one is one where you didn’t. Stay honest about which kind you’re shipping.

This shift in measurement is what most companies skip when they “do AI experiments.” They run experiments but track them on a project dashboard: completion percentage, on-time delivery, ROI estimates pulled out of thin air. Then they wonder why nothing scales. The reframe is the foundation. Without it, no ritual you bolt on top will hold.

The operational form of this principle (what counts as a successful learning, written down) is an artifact of the agile rhythm, not of the principle itself. Change what you measure before you formalize how you record it.

Once you measure learnings, the next question is where to place your bets.

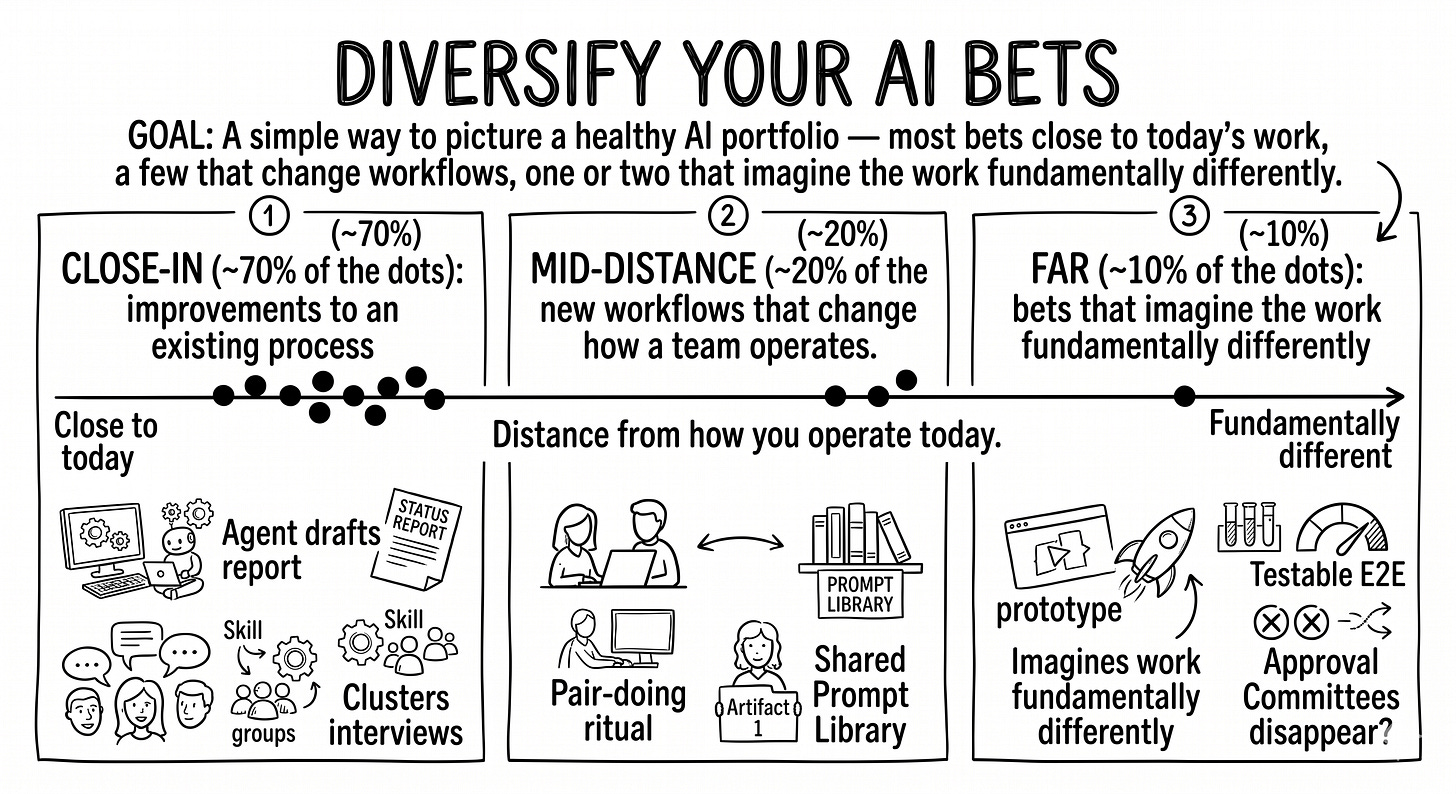

Diversify your AI bets

The third principle: don’t put all your AI eggs in one basket. Spread bets across distances from your current work.

A simple way to classify any AI experiment is by how far it sits from how you operate today.

Close-in bets. Improvements to an existing process. An agent that drafts your weekly status report. A Claude skill that turns customer interview transcripts into a clustered theme list. Low risk, fast feedback, modest payoff. You need these to build trust and momentum.

Mid-distance bets. New workflows that change how a team operates without rewriting the whole org chart. A pair-doing ritual that lets non-builders ship their first prompt. A shared prompt library that becomes the team’s first AI artifact.

Far bets. Bets that imagine the work fundamentally differently. What if half the approval committees disappeared because every prototype was already testable end-to-end? What if QA happened through monitoring instead of dedicated review? Most of these will not work. The few that do will reset what your company is capable of.

A backlog with only close-in bets produces incremental wins forever and never compounds. A backlog with only far bets produces theater and never ships anything usable. A healthy backlog has all three.

Bansi Nagji and Geoff Tuff named this exact pattern in Harvard Business Review in 2012. They call the three categories core, adjacent, and transformational, and they have a famous allocation rule: 70-20-10. Roughly seventy percent of the work on close-in bets, twenty percent on mid-distance, ten percent on far. It’s a default, not a law. But it’s a useful counterweight to the two failure modes above.
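If you want to see how far your own backlog sits from that default, the check is a few lines of arithmetic. What follows is a minimal sketch, not a prescribed tool: the `distance` and `days` fields, the `portfolio_drift` name, and the sample items are all assumptions made for illustration. It sums planned effort per bet distance and reports the gap to 70-20-10.

```python
from collections import Counter

# Default split from the 70-20-10 rule; a starting point, not a law.
TARGET_SHARE = {"close": 0.70, "mid": 0.20, "far": 0.10}


def portfolio_drift(backlog):
    """Return (actual share - target share) of effort for each bet distance.

    `backlog` is a list of dicts with hypothetical keys:
    "distance" (one of "close", "mid", "far") and "days" (planned effort).
    """
    effort = Counter()
    for item in backlog:
        effort[item["distance"]] += item["days"]
    total = sum(effort.values())
    if total == 0:
        raise ValueError("backlog has no planned effort to measure")
    return {
        distance: round(effort[distance] / total - target, 2)
        for distance, target in TARGET_SHARE.items()
    }


# Illustrative backlog: heavy on close-in bets, nothing far out.
backlog = [
    {"title": "Agent drafts the weekly status report", "distance": "close", "days": 6},
    {"title": "Cluster interview transcripts into themes", "distance": "close", "days": 2},
    {"title": "Shared prompt library", "distance": "mid", "days": 2},
]

for distance, gap in portfolio_drift(backlog).items():
    print(f"{distance}: {gap:+.2f}")
```

A positive number means you are overweight on that distance, a negative one means you are underweight. Here the backlog is ten points heavy on close-in bets and has no far bet at all, which is the first failure mode above in miniature.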

The cautionary anecdote: prompt engineering, then context engineering. When LLMs first hit the mainstream, the loudest trend was prompt engineering. Prompt libraries, prompt courses, prompt job titles. By mid-2025, Andrej Karpathy and Simon Willison were both publicly calling the shift in posts of their own: context engineering, meaning how the model gets fed the right retrieval, tools, memory, and state, is the load-bearing skill, and prompts are one ingredient inside it. Prompts didn’t die. They got demoted from the whole skill to one ingredient. A company that bet only on prompt infrastructure in 2023 didn’t waste the work. But two years later, they’re rebuilding most of the surrounding system to keep up. The companies that placed bets at varied distances (prompts and retrieval pipelines, prompts and agent tooling, prompts and internal context layers) compounded. The principle isn’t “predict the next trend.” It’s “don’t bet only on this one.”

Three principles tell you how to organize, what to measure, and where to bet. They need a rhythm. That’s what agile rituals give you.

Run it on agile rituals

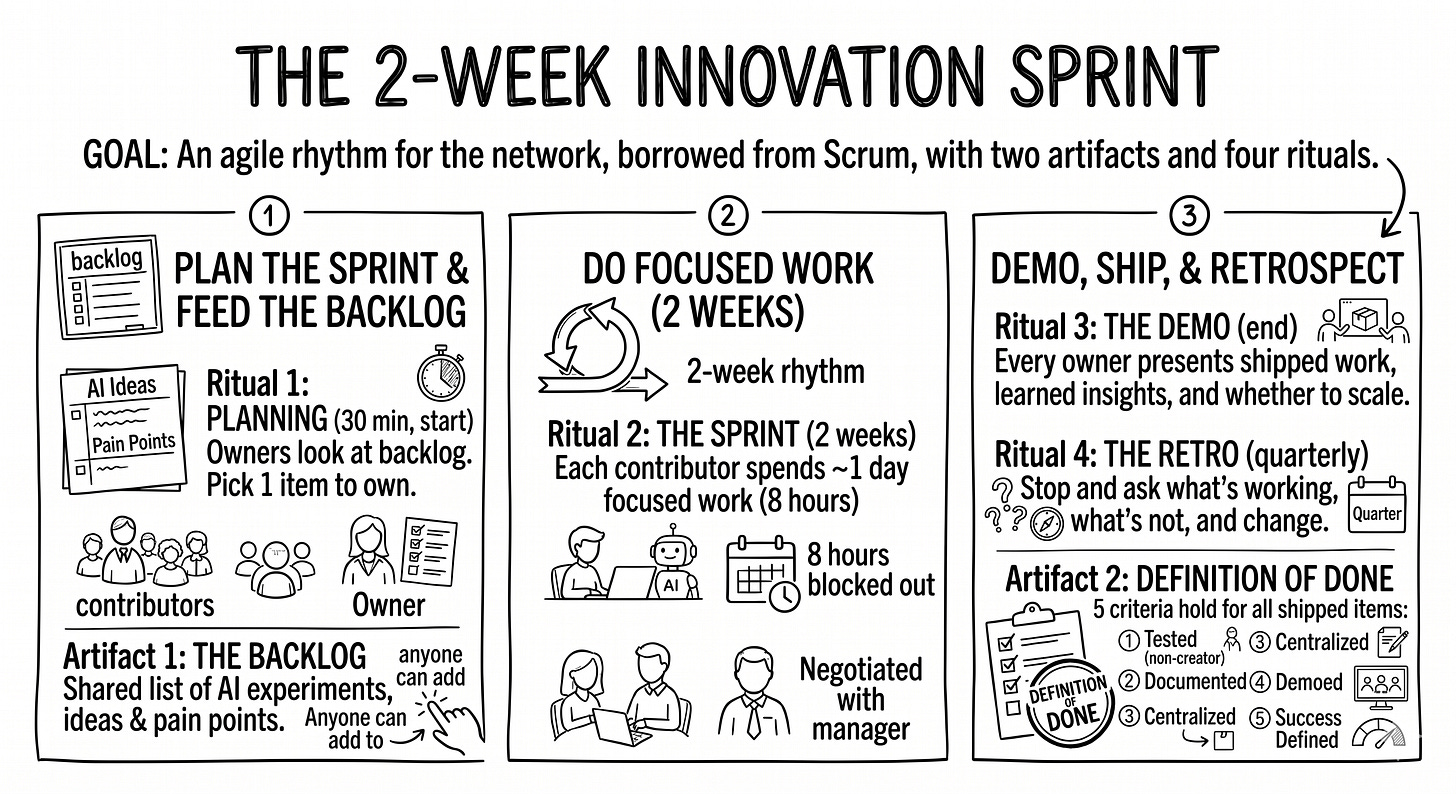

Borrow two artifacts and four rituals

Here’s where the answer surprises me as I write it: the cleanest framework is something you already know.

It’s the bones of agile. Stripped of the certifications, the ceremony, and the eight-page Confluence templates. Two artifacts (the things the work produces) and four rituals (when the work happens). That’s it.

Two artifacts:

The backlog. A single shared list of every AI experiment, idea, and pain point worth exploring. Anyone in the company can add to it. Some items are precise (“test Mistral Codestral on a real bug fix and report what it ships”); others are vague (“explore using LLMs for our weekly customer-research synthesis”). Both are fine. Vague items get sharpened by whoever picks them up. The backlog is a living queue, not a roadmap.

The Definition of Done. The same idea you’ve used in software work, applied here. Five criteria that have to hold for any experiment to count as shipped:

Tested by a non-creator. Someone who didn’t build the tool has used it on a real task and it worked.

Documented. Step-by-step instructions including required inputs and expected outputs.

Centralized. Published somewhere everyone can find it (a shared playbook, a wiki page, a folder).

Demoed. Presented at the next demo.

Success metric defined. A simple way to measure impact (time saved, frequency of use, quality lift) is named.

The “tested by a non-creator” criterion is the sharpest one. It’s dogfooding in its strict form: not “the team uses it,” but “someone other than the author can run it.” It forces the owner to make the work usable, not just buildable. A prompt that only the author can run is half the work. A prompt the author’s teammate can run on Monday is the whole work.
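If your backlog lives in a tool that can run a script, the five criteria fit in a small checklist. This is a sketch under assumptions, not a required format: the `DefinitionOfDone` class, its field names, and the helper functions are hypothetical names chosen to mirror the criteria above.

```python
from dataclasses import dataclass, fields


@dataclass
class DefinitionOfDone:
    """One flag per criterion; everything starts unchecked."""
    tested_by_non_creator: bool = False
    documented: bool = False
    centralized: bool = False
    demoed: bool = False
    success_metric_defined: bool = False


def missing_criteria(dod):
    """List the criteria that do not hold yet, in checklist order."""
    return [f.name for f in fields(dod) if not getattr(dod, f.name)]


def is_shipped(dod):
    """An experiment counts as shipped only when all five criteria hold."""
    return not missing_criteria(dod)


# Illustrative case: documented and demoed, but only the author has run it.
experiment = DefinitionOfDone(documented=True, centralized=True, demoed=True,
                              success_metric_defined=True)
print(is_shipped(experiment))
print(missing_criteria(experiment))
```

The example experiment is not shipped, and the only missing item is `tested_by_non_creator`. That is the case the criterion exists to catch.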

Four rituals:

The planning. Every two weeks, the contributors gather for 30 minutes. They look at the backlog, pick what each of them will own for the next sprint, and commit to a demo at the end. One owner per item, always. Others can help. The owner is accountable.

The sprint. Two weeks. Each contributor spends roughly one day of focused work on their item. That’s the contract: eight hours per sprint, negotiated with their manager up front. If they don’t have the capacity in a given sprint, they sit it out. No drama.

The demo. End of the sprint. Every owner presents what they shipped, what they learned, and whether it should scale to the rest of the company. This is the meeting that makes the network real. Skip it for two sprints in a row and the network dies.

The retro. Every few sprints (every quarter is a good rhythm), you stop and ask: what’s working, what’s not, what should we change? This is the meeting that lets the system itself adapt. Without it, the rituals drift into ceremony and the work gets stale.

Picture the cycle.

Why this lands. A two-week cadence is fast enough to keep up with how AI evolves. It’s slow enough to actually deliver. Eight hours per sprint is small enough that managers approve it without renegotiating org structure. It’s large enough to ship something demoable. The sprint length and the time commitment are both human-scale. That’s not a coincidence. It’s the same reason agile works for software in the first place.

The rituals give the fast movers a rhythm. They don’t, by themselves, lift the rest of the team. That takes one more move.

Lift the team with pair-doing

Here’s the question the rituals don’t answer: how does the 5% of fast movers actually help the other 95%?

If the answer is “by demoing,” it doesn’t work. Watching a demo doesn’t move someone from “I haven’t started” to “I just shipped my first prompt.” It produces admiration, then forgetting. You need a different ritual for that, and it has a name: pair-doing.

The lineage is borrowed, not invented. Pair programming (Extreme Programming, late 1990s) and Woody Zuill’s mob programming (mid-2010s), applied outside engineering. Same mechanic, different domain.

Pair-doing is what it sounds like. One fast mover blocks 30 to 60 minutes on their calendar. Anyone on the team can sign up. The non-mover brings a real task from their week: not a hypothetical, not a tutorial. A specific email they have to write, a customer call they have to summarize, a feature spec they have to draft. They sit together (in person or on a video call), and the fast mover walks them through doing the task with AI. Live. Hands on the keyboard.

The non-mover leaves having actually completed a piece of their own work with AI. Not having watched someone else do it.

Why pair-doing works when workshops don’t:

It uses real work, not exercises. Exercises are forgotten by Friday. Real work is the thing they were going to do anyway.

It produces a finished output that day. The customer-call summary is done. They’re not building a portfolio of practice runs. They’re shipping their actual job.

It scales linearly with capacity. That’s a feature, not a bug. It keeps the network honest about its size. If you have two fast movers and they each run two pair-doing slots a week, that’s four people lifted per week. After a quarter, that’s roughly 50 people who’ve shipped their first AI-assisted piece of work. Workshop attendance doesn’t translate that way.
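The capacity math in that last point is simple enough to check yourself. A minimal sketch, assuming a 13-week quarter and one new person per slot (both are simplifications, and `people_lifted` is a hypothetical helper name):

```python
WEEKS_PER_QUARTER = 13  # assumption: no holidays or skipped weeks


def people_lifted(fast_movers, slots_per_mover_per_week, weeks):
    """Upper bound on people lifted, assuming one new person per slot."""
    return fast_movers * slots_per_mover_per_week * weeks


# The example from the text: two fast movers, two slots each per week.
per_week = people_lifted(2, 2, 1)
per_quarter = people_lifted(2, 2, WEEKS_PER_QUARTER)
print(per_week, per_quarter)

# Linear scaling: doubling the fast movers doubles the lift, nothing more.
print(people_lifted(4, 2, WEEKS_PER_QUARTER))
```

Two fast movers give 4 people a week and 52 a quarter, which is the “roughly 50” above. Four fast movers give 104. Repeat visitors and empty slots pull the real number down, so treat it as a ceiling.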

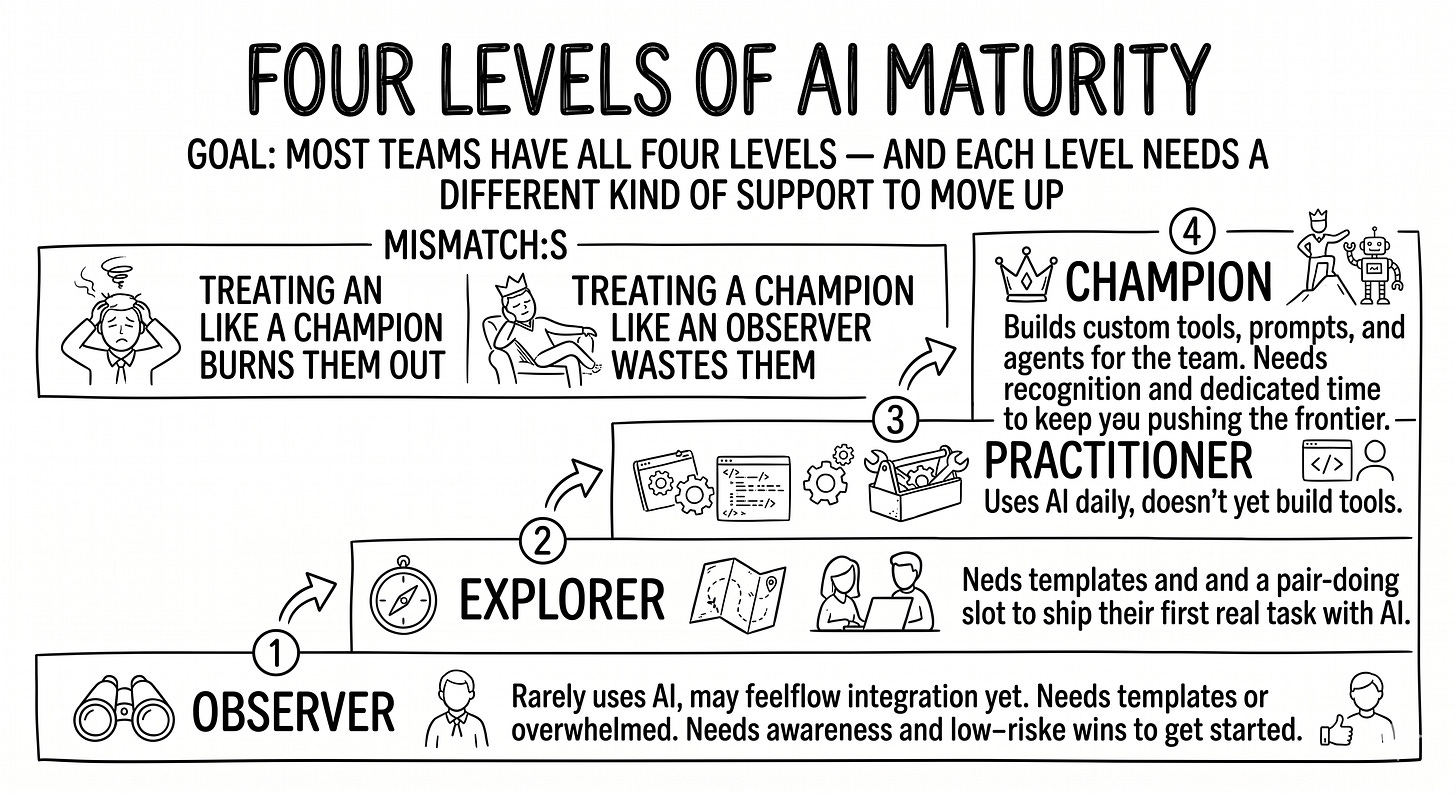

Different people need different support. Not everyone in the 95% is in the same place. A simple grid clarifies who needs what.

The grid matters because treating an Observer like a Champion burns them out, and treating a Champion like an Observer wastes them. Pair-doing is most powerful for moving Explorers to Practitioners. That’s where the gap is widest and the lift compounds fastest.

The rituals plus pair-doing are the engine. Four conditions keep the engine running past cycle three.

Set up the four conditions of success

These conditions aren’t extra rituals to bolt on. They’re the underlying things that have to hold for everything else to work. Set them up at kickoff. Check on them at every retro.

Lock in an active sponsor. A sponsor isn’t a manager who approves the network. A sponsor is a manager who protects time, attends demos, and amplifies wins. Picture the most senior person who’d be willing to walk into a Wednesday demo, ask a sharp question about what was shipped, and Slack a kudos to the contributor’s manager afterward. That’s the sponsor. Without one, contributors get pulled back into day-job delivery and the network dies in cycle three. If you can’t name your sponsor in one sentence and describe what they did at the last demo, you don’t have one.

Enforce the Definition of Done. Defining the DoD is easy. Enforcing it is the work. Hold every owner to all five criteria, especially “tested by a non-creator.” If an owner shows up at a demo with a prompt that only they can run, the demo isn’t done. It’s a status update. Push back warmly: “Great start. Who on your team can try it before next demo?” The DoD is what turns “I built something” into “the team can use it,” and it only happens if someone holds the line.

Switch from parallel to themed when the signal appears. Early on, let contributors pick whatever pain point lights them up. That’s how you harvest motivation and self-selection. Once the rhythm holds, switch modes: one theme per cycle, all experiments target it. Themed cycles produce something the rest of the company can adopt. Parallel sandboxes produce a scattered playbook nobody references. The trick is when. Too early and you kill motivation. Too late and the output stays shallow. Watch for the signal: more than half the demos start pulling from the same problem area without anyone asking. When that happens, the team is ready. In practice this lands somewhere between sprint 4 and sprint 6. The signal is the trigger, not the calendar.

Make pair-doing a habit, not a one-off. A single pair-doing session doesn’t shift behavior. A weekly slot, repeated for a quarter, does. Hold the calendar slot even when nobody signs up. The slot itself is the signal that this is how the team works now. After a few quiet weeks, somebody will book in. Usually the explorer who’s been watching from the sidelines and finally has a real task to bring.

The pattern across all four: easy to introduce, hard to sustain. Anyone can stand up a network in a kickoff meeting. The networks that last past cycle three keep these four conditions true after the launch energy fades.

Which makes the question concrete: how do you actually start?

Start it next sprint

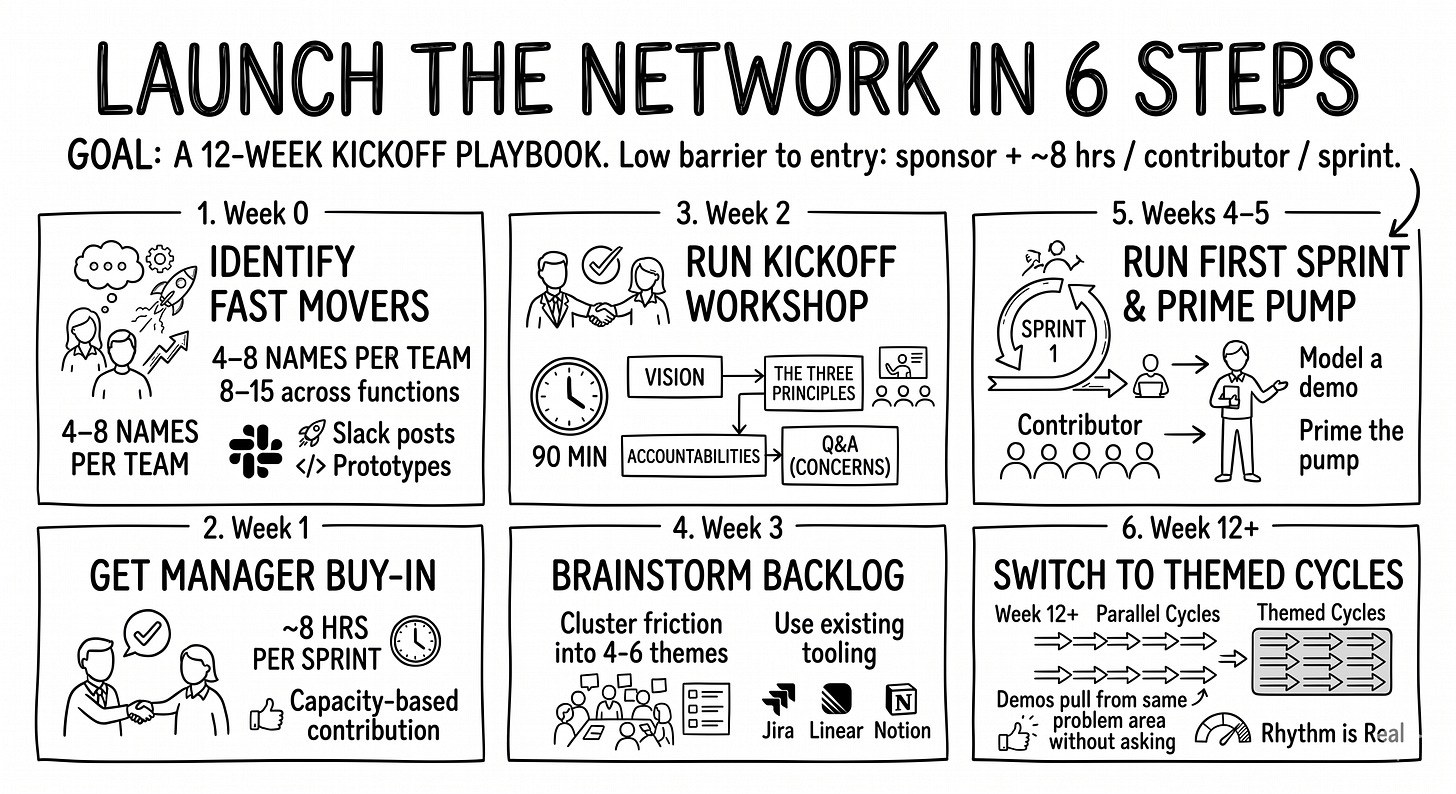

Six concrete moves. None require executive buy-in beyond a sponsor and roughly 8 hours per contributor per sprint.

Identify the fast movers. They’re easy to spot. They post AI things in Slack. They corner you after presentations to show you their latest prototype. They ask whether legal will finally approve a tool they’ve been quietly using. Make a list. Aim for 4 to 8 names at the team scale, 8 to 15 across functions at the company scale.

Get manager buy-in for the time. Walk to each name’s manager. Explain the network in two sentences (a small group of motivated people who’ll experiment, share, and lift the rest of the team) and the time commitment (one day per two weeks, if the contributor has the capacity that sprint). Most managers say yes once the time is bounded.

Run a kickoff workshop. 90 minutes. Four blocks: vision (where is the company on AI maturity, where does it want to be), the three innovation principles (so leadership doesn’t measure the network only on quick wins), accountabilities (what contributors are signing up for), and an open floor for concerns. The output is alignment, not a deliverable.

Brainstorm the first backlog. Within a week of the kickoff, run a 60-minute session where each contributor lists the friction points they hit in their work. Cluster into 4 to 6 candidate themes. Pick the first sprint’s items and put them in your existing tooling: Jira, Linear, Notion, whatever the company already uses. Do not introduce a new tool for this.

Run the first sprint, and prime the pump if you have to. The first demo is the most fragile moment. If two contributors couldn’t finish, present something yourself. Or pull in a piece of pre-existing work and frame it explicitly: “this is something I built before the network started. I’m using it today to model what a demo looks like.” The goal in cycle one isn’t volume. It’s establishing the rhythm. Once cycle two arrives, real demos start happening and you don’t have to push anymore.

Switch from parallel to themed when the signal appears. The first cycles are deliberately scattered. Let people pick what they’re motivated by. Once more than half the demos pull from the same problem area without anyone asking, the rhythm is real and you can switch to themed cycles: one pain point per cycle, all experiments target it. In practice this lands around sprint 4 to sprint 6. The signal is the trigger, not the calendar. This is the move that turns a fun network into something the rest of the company adopts.
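The switch signal is a strict-majority check, and you can run it on the demo list after each sprint. A minimal sketch, assuming you tag each demo with a problem area by hand; the `themed_signal` name and the area labels are hypothetical.

```python
from collections import Counter


def themed_signal(demo_areas):
    """Return the problem area that more than half the demos share, else None.

    `demo_areas` holds one hand-assigned problem-area label per demo
    in a single sprint.
    """
    if not demo_areas:
        return None
    area, count = Counter(demo_areas).most_common(1)[0]
    # "More than half" is a strict majority: 2 out of 4 does not count.
    return area if count > len(demo_areas) / 2 else None


sprint_3 = ["support", "support", "sales", "qa"]
sprint_5 = ["support", "support", "support", "sales", "qa"]

print(themed_signal(sprint_3))
print(themed_signal(sprint_5))
```

In this illustration sprint 3 returns `None` because two demos out of four is exactly half, not more. Sprint 5 returns `support`. The script only counts labels. Whether the convergence happened “without anyone asking” is still a judgment you make in the room.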

Six moves. Twelve weeks. The network either has a rhythm by then or you’ve learned what was missing.

Start here

Innovation portfolios. Agile rhythm. Pair work. None of these principles are new. What’s new is the cost of trying. A sponsor, a backlog, four rituals, a calendar slot for pair-doing. That’s the kit.

Pick a sponsor. Pick four people. Pick a sprint length. Start.

In a few months, look at your teams again. Either the gap closed, or you’ll know exactly which condition stopped holding.