How to lead a tech team through the AI shift

Empower your champions to create social proof that pulls the team forward. Launch small lighthouse projects to demonstrate immediate value and reframe the developer role to elevate professional craft.

For the last few decades, the formula for disrupting a market has been the same: Business people + developers = revolution.

When real estate agents were disrupted by Zillow, it was developers who built the code. When the hospitality industry was shaken by Airbnb, or taxi drivers were challenged by Uber, it was developers who wrote the algorithms. Every time an industry was turned upside down, the developers were the ones holding the shovel. They were the architects of change, safe in the control room while the rest of the world adapted to their code.

But now, for the first time to my knowledge, the disruption has come inside the house. The developers are disrupting themselves.

This is not just another framework update or a new cloud provider; this is a fundamental shift in who does the work. Just recently, Andrej Karpathy, one of the most respected thinkers in AI, shared a post on X that illustrates this paradigm shift.

He admitted that by December, his workflow had completely flipped: he is now 80% managing AI agents and only 20% touching the code manually. If one of the best engineers in the world is shifting from writing code to managing coding agents in the span of four weeks, imagine how the engineers on your team are processing this shift.

The result? A mix of adrenaline, confusion, and deep preoccupation.

Open Reddit or Hacker News, and you see two parallel realities. On one side, you have the hyper-enthusiasts claiming they built a startup in a weekend using Claude Code. On the other, you have legitimate anxiety and deep questioning. People aren’t just worried about their next performance review; they are wondering about their long-term relevance. They are asking:

Is my craft dead? Am I just a glorified spell-checker now? Is this even sustainable for the planet?

As a manager, you are standing in the middle of this storm. You might have top management demanding 90% AI adoption by Q4, while your team is looking at you with skepticism. You can’t just tell them, “Get on the bus or get left behind.” That’s lazy leadership. You also can’t recite the tired cliché that “AI won’t replace you, a human using AI will.” They know it’s a platitude.

I’ve spent the last few months in the trenches, reading the forums, talking to developers at meetups, and watching the mood shift from “autocomplete is cool” to “how do I orchestrate agents?” But I didn’t just rely on my own observations. I spent hours dissecting these challenges with Gemini, asking it to act as a change management expert, to challenge my assumptions and help me structure a playbook for this specific transition. This article is the result of that collaboration: a distillation of the principles and tactics to help you lead when the ground is moving.

If you want to move your team from point A, uncertainty, to point B, empowerment, you need more than a license to access the latest model. You need a new way to talk about work. You need to address the legitimate concerns (e.g., the quality issues, the boredom, the loss of craft) without dismissing their reality.

Here is the plan we will cover:

Some principles. We start with the psychology of change. We will look at why selling the solution fails if you don’t address the head, hands, and heart, and why you need to stop wasting energy arguing with resistors.

Some tactics. We move to execution. I will share the concept of building lighthouse teams to prove value, explore why a training matrix might work better than a bootcamp, and discuss how to use Aikido to redirect energy in 1-on-1s.

Some scripts. We face the hard truths. We will break down three deep objections I see most often: the fear of becoming a compliance officer, the risk of understanding debt, and the ethical concerns about energy usage.

Here I share the patterns I have observed in the trenches and the strategies that are currently working for me. Every engineering culture is unique, and the AI landscape changes so fast that what works today might be obsolete in six months. Please treat these ideas not as laws, but as experiments to run. Take what resonates with your specific culture, discard what doesn’t, and iterate on the rest.

Let’s get to work!

Some principles for navigating change

Before we get into the specific scripts and tactics, I want to share how I mentally approach change. When I’m looking at a transformation, there are three specific visuals that always pop into my head. They aren’t the only models out there, obviously, but these are the ones that really stuck with me and helped me make sense of the human side of things.

Here are those three core principles defined as deep-dive action plans for managers.

#1. The head, hands, and heart: Ignite motivation

The context: When we talk about change, we often overcomplicate it. At its core, moving a human being from point A to point B requires three things to happen simultaneously. First, the head: they need the intellectual understanding of the strategy (the map). Second, the hands: they need the technical capability and the tools to execute (the driving skills). And finally, the heart: they need the emotional motivation to actually want to move (the fuel). If all three are present, you get movement. If one is missing, you stall.

The challenge: In most AI transformations, companies are excellent at addressing the head and the hands. You organize team meetings to explain the strategic vision (head), and you buy thousands of licenses for Claude Code (hands). But you completely neglect the heart. You give the team a map and a brand-new car, but you forget to put fuel in the tank. If your developers feel that AI is a threat to their craft, their identity, or their job security, their tank is empty. No amount of training will make the car move if there is no fuel.

The common trap: The most common mistake managers make is trying to fix a heart problem with a head solution. When a developer says, “I don’t trust this code, it’s garbage,” they are often expressing fear, not a technical opinion. If you respond by showing them a chart of productivity gains or explaining the roadmap again, you are missing the point. You are answering an emotional objection with a logical argument. This doesn’t reassure them; it makes them feel unheard and pushed into a corner. You cannot fix a feeling with a spreadsheet.

A different perspective: Stop selling the solution and start diagnosing the blocker. You need to identify exactly which of the three buckets, head, heart, or hands, is empty. Resistance is rarely a monolith; it’s specific. Is it that they don’t understand the goal? Is it that they feel unskilled? Or is it that they are afraid? You need to play the role of a mechanic diagnosing an engine before you try to drive the car.

The diagnosis: Conduct a listening tour. Don’t do this with other managers; do it with the people actually typing the code. Pick five developers and ask them these three specific questions to map their resistance.

Test the head: “If you had to explain to a new hire why leadership wants us to use AI, what would you say?” Listen carefully. If they say “To ship features faster,” you are good. If they say “Because the boss read a tweet and got FOMO,” you have a head problem.

Test the hands: “What is the specific friction point that makes you want to close the tool right now?” Maybe the model keeps hallucinating deprecated libraries or losing context. This is a hands problem.

Test the heart: “When you open the AI tool, does it feel like a superpower, or does it feel like a chore?” This is the critical question. If they say “It feels like a chore” or “It makes me lazy,” you are dealing with a heart problem.

The fix:

If the head is empty: Stop training. Start contextualizing. Show them how AI solves their specific business problems, not just the company’s stock price.

If the hands are empty: Stop motivating. Start unblocking. The friction is rarely the laptop speed; it’s the integration complexity. Provide pre-built context scaffolding (like .cursorrules files) or run a context engineering workshop to solve the specific hallucinations blocking them.

If the heart is empty: Stop explaining. Start reassuring. Explicitly frame AI as a tool to remove drudgery so they can focus on architecture. Give them a guarantee that using AI won’t negatively impact their performance review if things break initially.

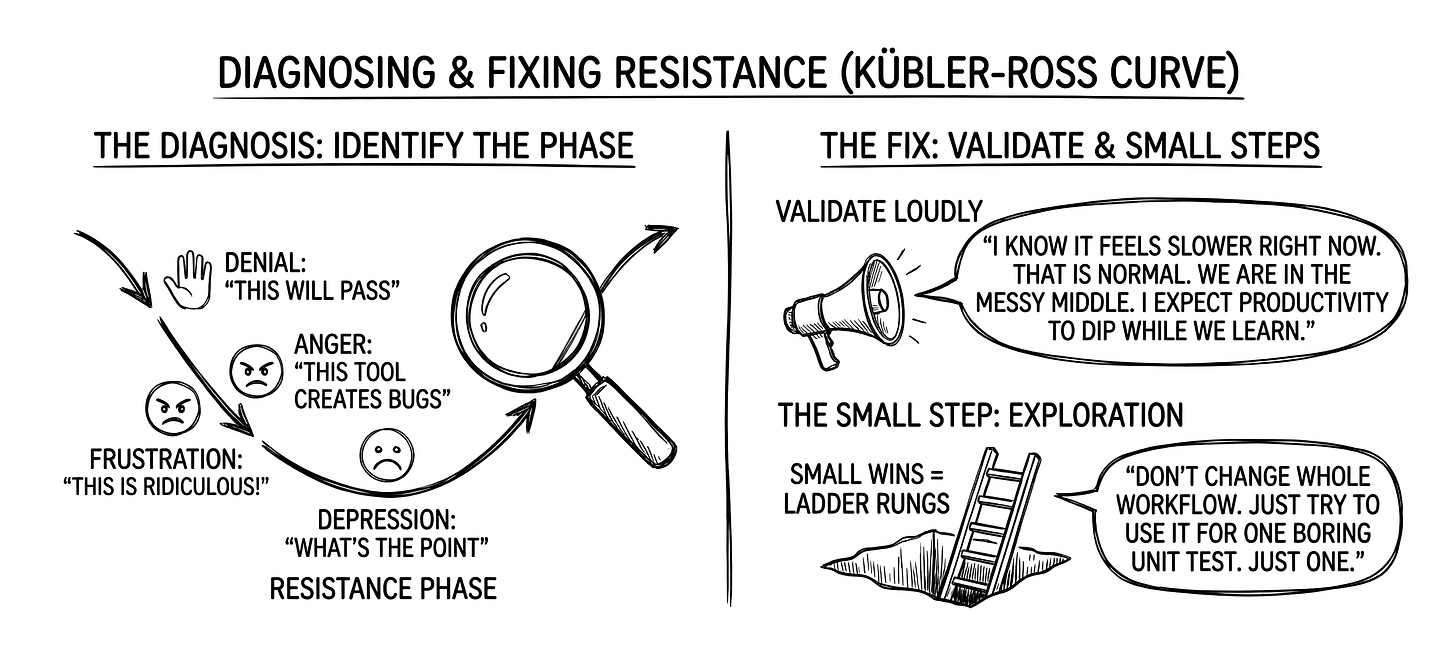

#2. The change curve: Navigate emotional stages

The context: We tend to sell AI adoption like a rocket ship: you install the tool, and productivity shoots up and to the right immediately. But human reaction to deep change doesn’t work like that. It follows a U shape known as the Kübler-Ross change curve. It starts with shock, moves to denial, crashes into frustration and depression, and only then slowly climbs up to experimentation and acceptance.

The challenge: The danger zone is the bottom of the U, often called the valley of despair. After the initial excitement wears off, reality hits. Your team realizes the AI writes buggy code, uses outdated libraries, and requires heavy debugging. They spend more time fixing the AI’s mess than if they had written the code manually. Productivity drops below the baseline. This is where morale tanks, and people think: “This is useless. My workflow is ruined. I want to go back to the old way.”

The common trap: The worst thing you can do when your team is in the valley of despair is to stand at the finish line and cheerlead. If you stand in the integration phase and yell down to your depressed team, “Come on guys, look at the ROI! It’s great up here!”, you look out of touch and delusional. It feels like toxic positivity. They don’t need to hear how great the future is; they need you to acknowledge how hard the present is.

A different perspective: You need to throw them a rope. Validation is your most powerful tool here. You must admit that the tool is imperfect and that the dip in productivity is the price of admission. By acknowledging the pain, you remove the pressure to pretend everything is perfect. You normalize the struggle, which paradoxically helps them move through it faster. The only way out of the valley is through it.

The diagnosis: Identify the phase. Look at your team honestly. Are they in denial (“This will pass”)? Anger (“This tool creates bugs”)? Or depression (“What’s the point”)?

The fix:

Validate loudly: Say clearly in your next meeting: “I know it feels slower right now. That is normal. We are in the messy middle, and I expect productivity to dip while we learn.”

The small step: Don’t ask for transformation. Ask for exploration. Tell them: “I don’t need you to change your whole workflow today. Just try to use it for one boring unit test. Just one.” Small wins are the ladder rungs out of the pit.

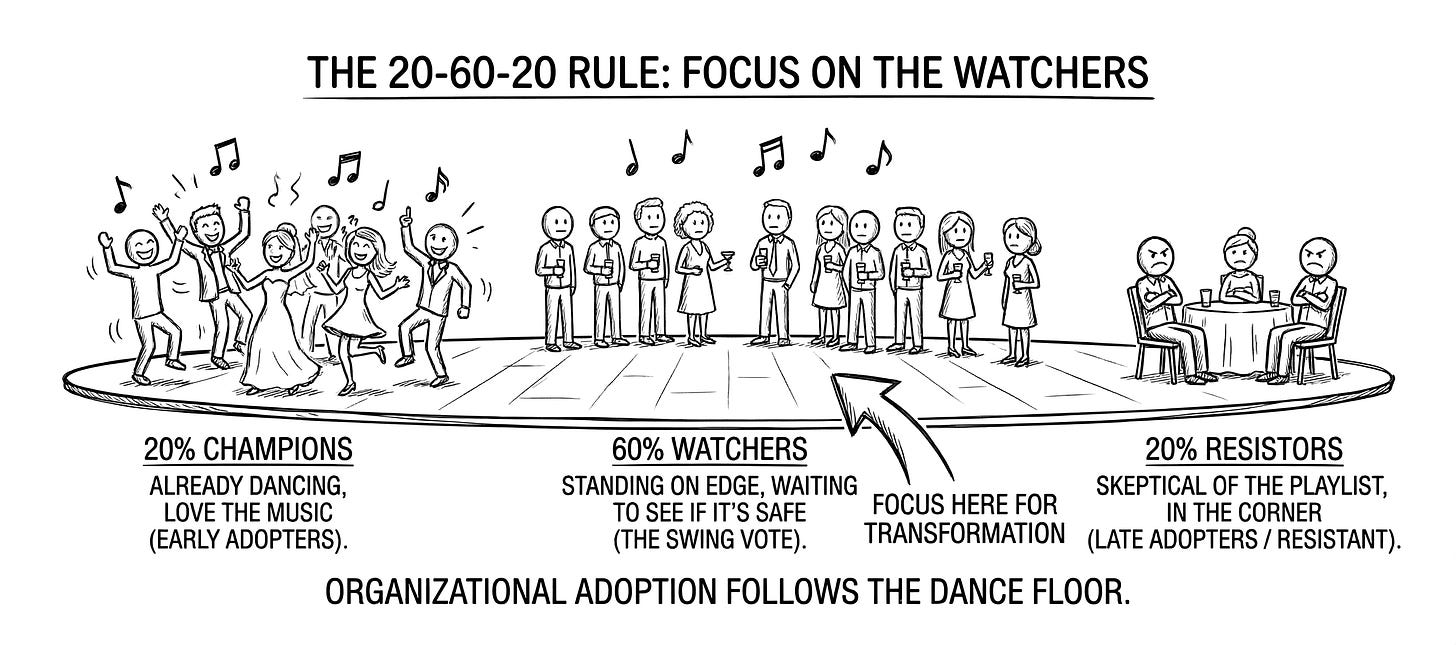

#3. The 20-60-20 rule: Focus on the watchers

The context: You cannot transform everyone at the same speed, and if you try, you will burn out. In every organization, adoption follows the 20-60-20 rule. Imagine a wedding dance floor. You have the top 20% champions who are already dancing and love the music. You have the bottom 20% resistors who are sitting in the corner with their arms crossed, skeptical of the playlist. And you have the middle 60% watchers standing on the edge, holding their drinks, waiting to see if it’s safe to join in.

The challenge: Most leaders spend most of their energy arguing with the resistors. They try to convert the loudest critics using logic, debate, and pressure. This is a massive trap. You risk confusing resistance with wisdom. While you are busy exhausting yourself in that argument, you miss the technical risks they are spotting, and the critical middle 60% gets bored, sees the conflict, and decides to sit down.

The common trap: Silencing the skeptics. In engineering, resistors are often the guardians of institutional memory. If you treat every objection as negativity, you create a culture of toxic positivity where legitimate technical risks, like security flaws or non-deterministic behaviors, are suppressed.

A different perspective: Filter the noise. Focus entirely on empowering the champions to attract the watchers, while simultaneously redirecting the resistors’ energy into quality control. The watchers don’t look to you (the manager) for social cues; they look to their peers. If they see the champions having fun and the resistors ensuring the system is robust, the watchers will naturally feel safe to join.

The diagnosis: Map the room. Before your next team sync, look at the roster and mentally tag everyone: dancer, watcher, or wallflower.

The fix:

Amplify the dancers: Give your champions beta access to the newest models, assign them the most interesting greenfield projects, and praise them publicly. Make being a champion look fun.

Pull the middle: Explicitly highlight the benefits the champions are getting. “Hey everyone, look how Team X finished their testing phase in half the time because they used the AI agents.” Let the results do the selling.

Red-team the resistors: Distinguish between emotional resistance (fear) and principled objection (quality). Don’t argue too much with the principled objectors; assign them the role of red team. Tell them: “Your job isn’t to stop the AI, but to break it. Find the flaws.” Use their skepticism as a stress-test mechanism to ensure stability.

Now that we have a shared language, it’s time to start doing. Let’s move on to the specific, battle-tested maneuvers you can use to start moving the needle.

Some practical tactics for driving adoption

You don’t need a 50-page strategy document to transform a team. You need a few targeted interventions that create momentum without burning you out. In this section, I will cover three specific levers: organizational structure (how you run pilots), skill acquisition (how you teach), and communication (how you handle the 1-on-1s).

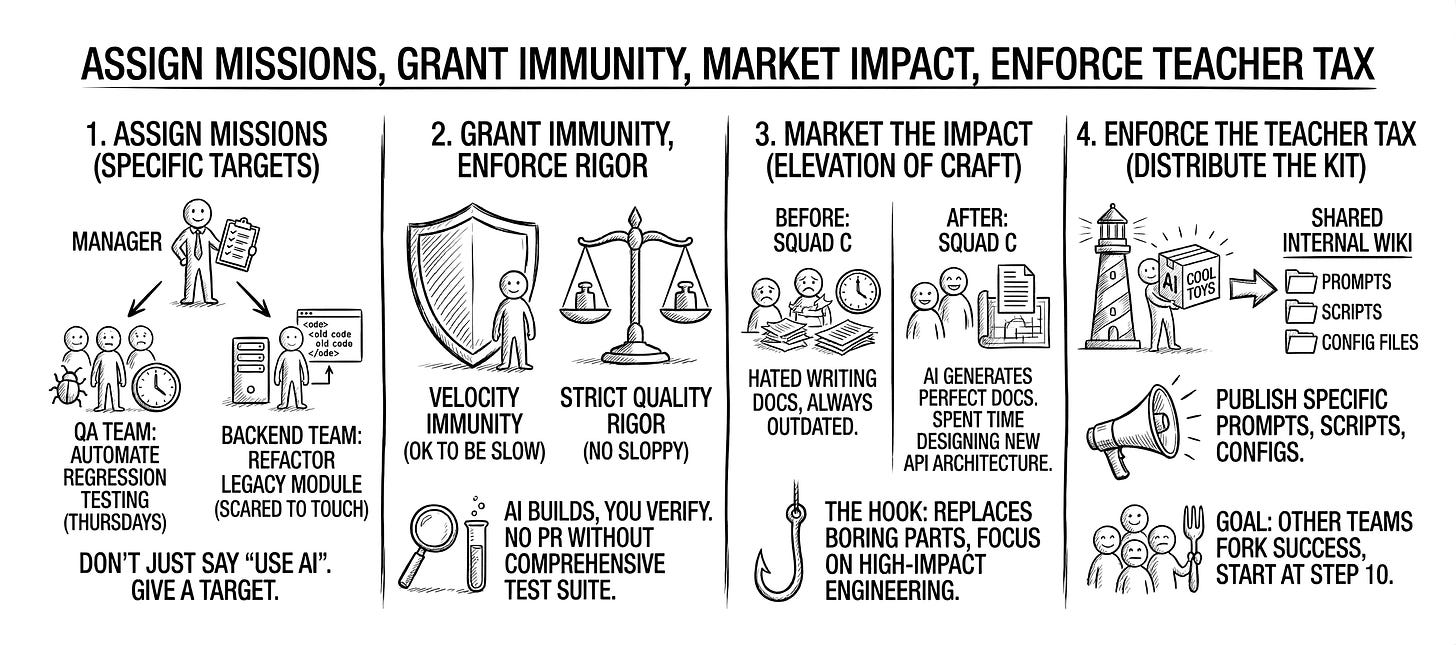

#1. The lighthouse strategy: Build visible success

The context: When you start a transformation, the temptation is to go big immediately. You look at your developers and think: “We need everyone using AI by next month.” You plan a massive rollout, generic training for every department, and expect immediate organizational impact.

The challenge: If you try to change everything at once, you will trigger the organizational immune system. You will be fighting a war on ten fronts. The watchers will get frustrated by setup issues, the resistors will band together to complain, and you will spend all your time putting out fires. Worse, if you give everyone a generic tool without a specific purpose, they will use it for trivial things and conclude it’s a toy.

The common trap: The big bang rollout. This is sending an email saying: “Starting Monday, everyone must use Claude Code.” This creates chaos. You are forcing the valley of despair on the entire company at the exact same moment.

A different perspective: Build a fleet of lighthouses. Instead of pushing the whole company, pick 3 or 4 small, agile squads and give them specific missions.

Squad A, the builders: “Your mission is to see if AI can speed up our boilerplate coding.”

Squad B, the guardians: “Your mission is to see if AI can write 80% of our unit tests.”

Squad C, the scribes: “Your mission is to automate our technical documentation.”

Your goal is not to get 100+ people using AI poorly; it’s to get 3 teams using AI perfectly to solve specific, painful problems. Crucially, the job of these teams is not just to succeed, but to package their success so others can copy it.

Experiments to try:

Assign specific missions. Don’t just say “Use AI.” Give them a target.

To the QA team: “Can you use AI agents to automate the regression testing that eats up your Thursdays?”

To the backend team: “Can you use AI to refactor this legacy module we are all scared to touch?”

…

Grant velocity immunity, but enforce quality rigor. Give these teams permission to be slow, but forbid them from being sloppy. Tell them: “For the next 4 weeks, ignore your velocity metrics. It’s okay to be slow while learning. However, because AI generates code so fast, you must be stricter than ever on quality. You can use AI to build it, but you must use rigorous testing to verify it. No PR is merged without a comprehensive test suite.”

Market the impact. When the experiment is done, don’t talk about lifestyle improvement or time saved. Talk about the elevation of craft.

The message: “Look at squad C. They used to hate writing documentation, so our docs were always outdated. Now, they use AI to generate structured, perfect documentation automatically. They stopped doing the boring work and spent that time designing the new API architecture.”

The hook: You are showing the other teams that AI doesn’t replace them; it replaces the boring parts of their job so they can focus on the high-impact engineering they actually enjoy.

Enforce the teacher tax. This is the deal: The lighthouse teams get the cool toys and the immunity, but in exchange, they must distribute the kit. They don’t just say “it works.” They must publish the specific prompts, the scripts, and the configuration files they used to the shared internal wiki. The goal: Any other team should be able to fork their success and start at step 10, not step 1.
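The quality-rigor rule in the experiments above (“No PR is merged without a comprehensive test suite”) could be backed by a lightweight automated check. The sketch below is one possible starting point, not a definitive gate: it only flags a PR that changes source files without touching any test file, and it cannot judge whether the tests are comprehensive. The file-naming conventions (`tests/` directories, `test_` prefixes, `.py` sources) and the function names are assumptions for illustration.

```python
from pathlib import PurePosixPath

# Assumed conventions (hypothetical; adapt to your own repository layout).
SOURCE_SUFFIXES = {".py"}


def is_test_file(path: str) -> bool:
    """Treat anything under a tests/ directory or named test_* as a test file."""
    p = PurePosixPath(path)
    return "tests" in p.parts or p.name.startswith("test_")


def source_files_without_tests(changed_files: list[str]) -> list[str]:
    """Return the changed source files if the PR touches no test file at all.

    An empty list means either tests were changed or no source code was touched.
    """
    sources = [
        f for f in changed_files
        if PurePosixPath(f).suffix in SOURCE_SUFFIXES and not is_test_file(f)
    ]
    has_tests = any(is_test_file(f) for f in changed_files)
    return [] if has_tests else sources
```

A lighthouse team might feed this the list of files changed in a PR and ask the author to add tests whenever the result is non-empty; a human reviewer still decides whether those tests are good enough.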

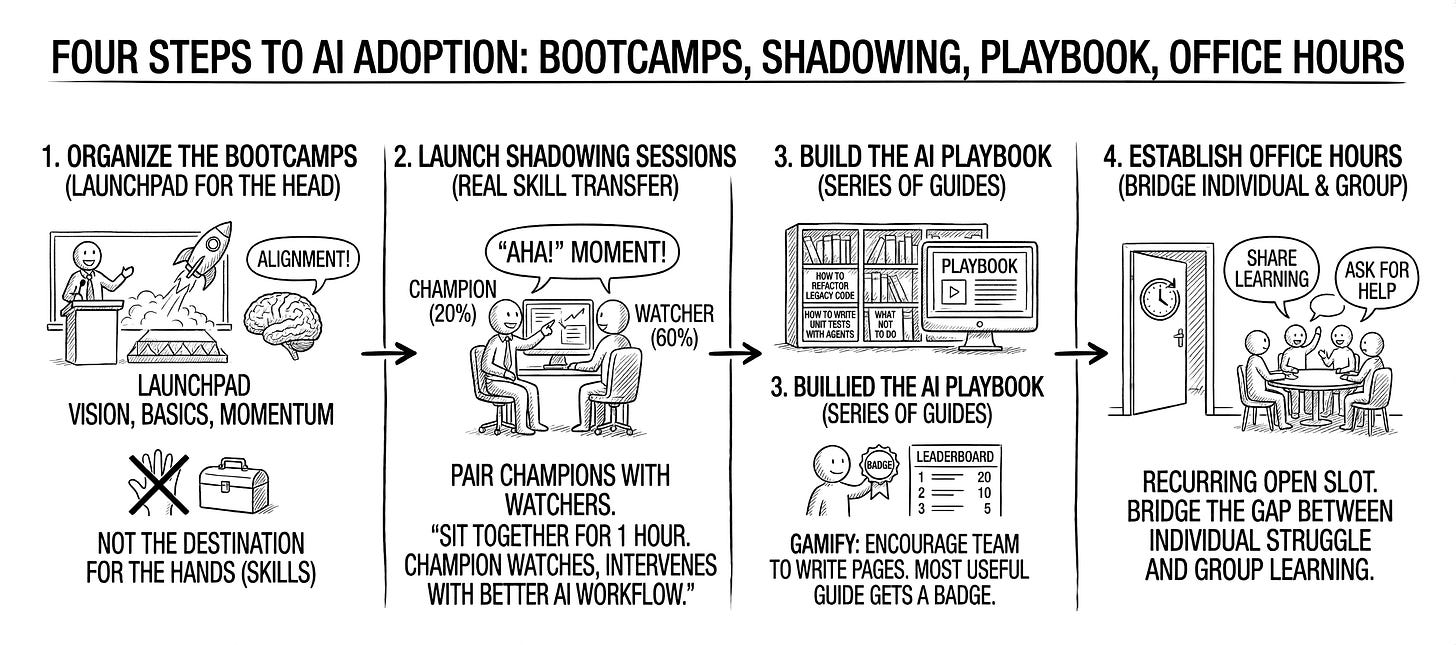

#2. The training matrix: Go beyond the bootcamp

The context: When companies decide to upskill their workforce, they usually default to a single mode: the corporate bootcamp. You hire an agency, book a room, and teach 100+ people at once. There is nothing wrong with this; it creates energy, establishes a shared vocabulary, and gets everyone’s attention. It’s a necessary kick-off.

The challenge: The problem isn’t the bootcamp itself; the problem is stopping there. People don’t learn a complex new behavior just by listening to a lecture. They learn by trying, failing, and getting unstuck. If you rely 100% on the bootcamp, you hit the forgetting curve: people leave the room inspired, but the moment they face a specific blocker at their desk, they revert to their old habits. You are only pulling one lever when you need to pull four.

The common trap: The one-size-fits-all approach. This is thinking that once you have ticked the box marked “training session,” your job is done. It assumes that a senior architect learns the same way as a junior dev, and that listening to a slide deck is the same as writing code.

A different perspective: You need to deploy a training matrix. To get true adoption, you must cover all four quadrants of learning.

The launchpad (group + synchronous): The bootcamps. Use them to set the vision, explain the basics, and create group momentum.

The library (group + asynchronous): This is your AI playbook. It’s the self-service documentation and tutorials where anyone can go to find answers when they are stuck.

The shadowing (individual + synchronous): This is the most important quadrant. This is 1-on-1 coaching. It’s when an AI champion sits next to a watcher and helps them debug a specific problem. That moment of clicking, when the watcher realizes, “Oh, that’s how you do it,” only happens here.

The lab (individual + asynchronous): This is learning by doing. Giving people specific solo projects or homework to explore the tools at their own pace.

Experiments to try:

Organize the bootcamps. Use the workshops to set the vision, explain the basics, and create group momentum. They are your launchpad for the head (alignment), not the destination for the hands (skills).

Launch shadowing sessions. This is where the real skill transfer happens.

The action: Pair your champions (top 20%) with your watchers (middle 60%).

The task: “Sit together for 1 hour. The champion watches the watcher work and intervenes only to show a better AI workflow.”

The goal: Creating those “Aha!” moments that never happen in a classroom.

Build the AI playbook. Don’t just list prompts. Build a series of guides.

The content: “How to refactor legacy code,” “How to write unit tests with agents,” “What not to do.”

The method: Gamify it. Encourage the team to write these pages. The person who writes the most useful guide gets a badge.

Establish office hours. Create a recurring open slot where anyone can drop in to share what they learned or ask for help. This bridges the gap between individual struggle and group learning.

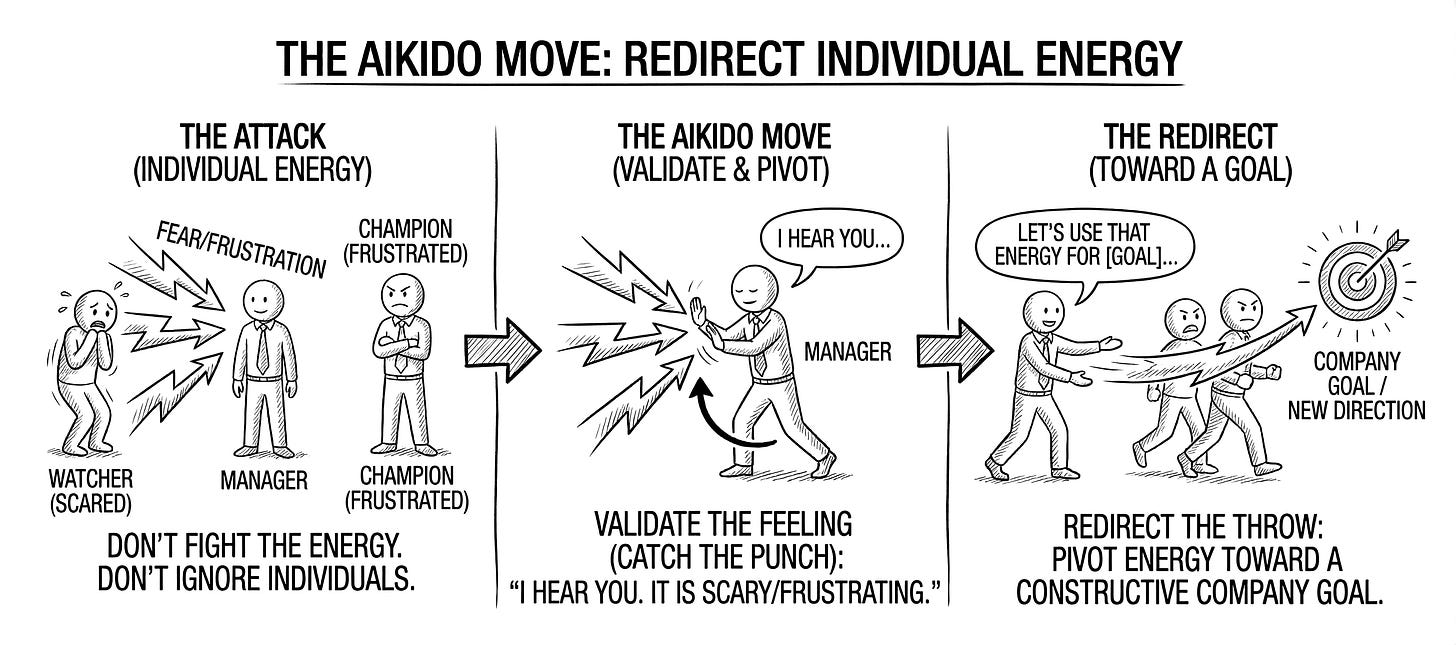

#3. The Aikido move: Redirect individual energy

The context: Earlier, I told you to stop arguing with the resistors in group settings. That rule stands: never give a platform to negativity in a town hall. However, you cannot manage a team by ignoring individuals. Eventually, you will sit down for a coffee with a scared watcher, or a frustrated champion who wants to bypass security protocols. You need a universal way to handle these conversations without getting into an exhausting debate.

The challenge: Most managers handle objections with logic or policy.

When a watcher says, “AI writes bad code,” the manager argues: “No, look at the benchmarks, it’s faster.”

When a champion says, “Security is blocking me, I need to use this unauthorized tool,” the manager quotes the rulebook: “Compliance is mandatory.”

In both cases, you are building a wall. The watcher feels unheard and digs in. The champion feels stifled and disengages (or goes rogue). You are fighting against their energy.

The common trap: The head-on collision. This is when you try to stop someone’s emotion with a fact. If a developer feels a loss of craft, showing them a productivity chart doesn’t help; it insults them. If a champion feels a need for speed, telling them to wait for legal kills their drive.

A different perspective: Use Aikido.

Aikido is a Japanese martial art where you do not block an attack. Instead, you step aside, grab the attacker’s wrist, and use their own momentum to throw them in a new direction. In management, this means: Don’t fight the energy; redirect it. You must validate their specific feeling (catch the punch) and then pivot that energy toward a goal that helps the company (redirect the throw).

Experiments to try:

Validate the emotion, the catch. No matter who you are talking to, agree with their emotion. Do not say “But...” Say “You’re right.”

To the watcher (fear): “You’re right. The AI code is often mediocre and risky compared to what you write.”

To the champion (frustration): “I agree. The security process is too slow and it is killing your momentum.”

Why: This lowers their defenses. You are no longer the enemy; you are an ally.

Redirect the momentum, the pivot. Use their specific energy to assign a new, valuable role that benefits the team.

For the watcher: They have fear or caution. Tell them: “Because you are so skeptical of the quality, I need you to be the final Human-in-the-Loop. Don’t write the code; audit the AI. You are now our quality guardian.”

For the champion: They have excess energy. Tell them: “Because you move so fast, I need you to lead the experimental sandbox. You break the rules there, and then you teach us what is safe. You are now our R&D lead.”

Formalize the new mandate. Don’t leave it as a casual chat. Send a follow-up email confirming their new special assignment.

The action: “Mark, as discussed, I’m counting on you to be the quality guardian for the AI output. Please reject any PR that doesn’t meet your standards.”

The result: You have turned their objection into a job description.

With these three tactics, you have a complete operating system for driving change. You are no longer pushing a boulder uphill; you are creating an environment where adoption becomes the path of least resistance. However, even with the best strategy, you will still encounter specific, loud roadblocks. In the next section, we will look at the common objections you will face and exactly how to handle them.

Some scripts for overcoming resistance

Tactics are useful for how to implement AI, but they don’t answer the why. In my conversations at meetups, forums, and coffee breaks, I’ve noticed a shift. It’s no longer just about “how do I prompt?” The questions are getting deeper. From what I can see right now, the resistance tends to cluster around three main anxieties. This isn’t an exhaustive list (and the landscape changes every week), but these are the three main battles I see managers facing most often today.

These aren’t haters; they are often your most conscientious employees acting as the company’s immune system. If you ignore them, you lose your soul. If you fight them, you lose your talent. Here is how to navigate them.

#1. The crisis of meaning: Redefine the craft

The context: You are in a 1-on-1 with a senior developer. They look demoralized. They tell you: “If my job is just to verify code generated by a machine, I feel like a factory operator, not an engineer. I don’t want to be a human-in-the-loop compliance officer. If you strip the craftsmanship out of my role, why should I be loyal to the mission? I might as well be a mercenary doing the bare minimum.”

The challenge: This is the fear of mediocrity and boredom. Your top talent thrives on problem-solving. They fear that AI will automate the fun part (the creative coding) and leave them with the boring part (reading and debugging someone else’s sloppy code). They worry about mediocrity by design: that by relying on a probabilistic model, we are destined to build average, uninspired products.

The common trap: Dismissing their pride. Telling them: “You shouldn’t care who writes the code as long as it ships.” Or worse, telling them: “This frees you up to do higher level thinking,” without defining what that actually means. To a builder, not building feels like dying.

A different perspective: You must redefine craftsmanship. Acknowledge that AI is, by definition, the average of the internet. It cannot invent the “pink elephant with wings” unless it has seen it. Don’t try to rebrand them as curators. Reviewing AI code is painful work, and they know it. Instead, reframe the altitude of their work. Tell them: “You are right. Reviewing code is boring. But I don’t need you to be a compliance officer. I need you to be the staff engineer. The AI is the junior developer. You need to stop writing loops and start designing systems.”

Experiments to try:

Map the difference between drudgery and art. Sit down and map their tasks. Agree that debugging AI code is boring. Tell them: “Let’s use the AI only for the parts of the job you hate (boilerplate, unit tests). I want you to ban AI from the crown jewels, the complex core architecture where your human expertise is irreplaceable.”

Elevate their role to system architect. Formalize their shift from code writer to system designer. Stop asking them to curate output. Ask them to orchestrate solutions. Tell them: “The AI can lay the bricks, but it cannot design the cathedral. I need you to focus 100% of your energy on system design, security, and scalability. You are now the architect; the AI is just the contractor.”

Designate specific no-AI zones. Designate specific complex projects where AI is forbidden to keep their mental muscles sharp. “For this critical security module, I want zero AI. We need pure human understanding here.”

#2. The debt trap: Enforce explainability

The context: A lead architect challenges you in a meeting: “We are generating features at 10x speed, but we are also accruing 10x understanding debt. In three years, when this complex AI-generated system fails, will we have anyone left on staff who actually understands the architecture well enough to fix it? Or are we becoming a vibe coding company where we just guess until it works?”

The challenge: This is the fear of fragility. It’s a valid fear. If the goal of AI is to remove human input from decision-making, code robustness usually suffers. We risk building a black box that nobody understands, leading to a catastrophic failure that no human can debug because they have lost the meta-knowledge of how the system was built.

The common trap: Blind optimism. Saying: “The AI will be better at debugging by then,” or “We have tests for that.” This confirms their suspicion that leadership is reckless and focused only on short-term KPIs like lines of code generated.

A different perspective: Treat the AI as an unreliable narrator. Agree that understanding debt is the biggest risk to the company. Position the senior architect not as a blocker, but as the guardian against this debt.

Experiments to try:

Enforce explainability reviews. Change your code review policy. “If you use AI to generate a function, you must be able to explain exactly how it works line-by-line during the review. If you can’t explain it, the PR is rejected. We don’t ship code we do not understand.”

Institute the whiteboard rule. Build knowledge maintenance into the daily workflow. During a PR review, the reviewer picks one complex, AI-generated function at random and asks the author to explain the logic on a whiteboard (or shared screen) without looking at the comments. If they fail, the code is rewritten manually.

Define strict limits for vibe coding. Set strict boundaries. “Vibe coding is okay for prototypes. It’s strictly banned for production core. For production, we need engineering, not vibes.”
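The whiteboard rule above depends on the reviewer picking a complex function at random, which is easy to automate for Python code. The following is a minimal sketch under stated assumptions: the source passed in is the AI-generated code under review (the script cannot detect authorship), “complex” is approximated by a crude count of branching constructs, and the function names and the threshold of 2 are hypothetical choices rather than an established metric.

```python
import ast
import random

# Crude stand-in for "complex": count branching constructs inside a function.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.Try, ast.BoolOp)


def branch_count(func: ast.AST) -> int:
    """Count branching nodes anywhere inside the given function definition."""
    return sum(isinstance(node, BRANCH_NODES) for node in ast.walk(func))


def pick_function_for_whiteboard(source, min_branches=2, rng=None):
    """Pick one sufficiently complex function name at random, or None if none qualify."""
    rng = rng or random.Random()
    tree = ast.parse(source)
    candidates = [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and branch_count(node) >= min_branches
    ]
    return rng.choice(candidates) if candidates else None
```

Passing a seeded `random.Random` makes the pick reproducible if the team wants to audit which functions were selected; leaving it unseeded keeps the choice unpredictable for the author.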

#3. The ethical objector: Prioritize utility

The context: An employee raises a hand at a team meeting: “How do we plan to take responsibility for the environmental impact? We are consuming water and energy at the scale of a small nation just to generate mediocre emails. Are we becoming an unsustainable company? Is this just a bubble like the expert systems of the 80s, and we are destroying the planet for a fad?”

The challenge: This is the fear of moral misalignment. Employees want to work for a company that does good. If they see AI being used wastefully (e.g., generating 4 images of a wine glass just to pick one), they see leadership as irresponsible. They worry about the out by default principle: that perhaps we shouldn’t use AI at all unless necessary.

The common trap: Greenwashing. Saying: “We offset our carbon,” or “We have to do it because competitors are.” This sounds hollow to someone who is genuinely worried about the depletion of resources.

A different perspective: Adopt a strategy of intelligent parsimony or frugal AI. Agree that AI should be out by default. It should only be used when the value exceeds the cost.

Experiments to try:

Conduct a utility vs. cost audit. Don’t use AI for everything. “We are not going to use LLMs to classify simple text if a regex script can do it. We will use the smallest model possible for the job. We don’t need a cannon to kill a fly.”

Reward real work over POCs. Address the fear that only AI toys are rewarded. Publicly recognize a team that delivered a critical non-AI feature or optimized a system to use less compute.

Assign the value density mandate. Redirect their eco-anxiety into governance. “I share your concern. That’s why I want you to audit our usage. We will strictly limit inference to high-leverage engineering tasks. We will not use GPUs to summarize Slack threads or write emails. If the output doesn’t justify the energy cost, we kill the feature. Your job is to ensure we aren’t using a chainsaw to cut butter.”
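The utility vs. cost audit above (“We are not going to use LLMs to classify simple text if a regex script can do it”) can be made concrete with a rules-first router. This is a minimal sketch, not a recommended architecture: the categories, the patterns, and the `llm_fallback` callable are all hypothetical placeholders, and no real model API is called here.

```python
import re
from typing import Callable, Optional

# Hypothetical categories and patterns, for illustration only.
RULES = [
    ("billing", re.compile(r"\b(invoice|refund|payment)\b", re.IGNORECASE)),
    ("login", re.compile(r"\b(password|log ?in|2fa)\b", re.IGNORECASE)),
]


def classify(
    text: str,
    llm_fallback: Optional[Callable[[str], str]] = None,
) -> tuple[str, str]:
    """Return (label, method): try cheap regex rules first, call the model only if none match."""
    for label, pattern in RULES:
        if pattern.search(text):
            return label, "regex"
    if llm_fallback is not None:
        # Only texts the rules could not handle reach the expensive path.
        return llm_fallback(text), "llm"
    return "unknown", "none"
```

Counting how often the returned method is `"llm"` gives a rough measure of how many requests actually needed a model, which is the kind of number an auditor could use to argue for a smaller model or for more rules.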

If you want to dig deeper into the actual ecological impact of LLMs, there is an article by Andy Masley. It really helped ground my understanding, and it has become my personal reference whenever I need logical explanations on the topic.

You cannot win these battles by arguing. You win them by agreeing. The bored genius is right: AI is boring if you use it wrong. The architect is right: understanding debt is real. The eco-warrior is right: the energy cost is high. By validating these fears and putting strict human guardrails in place, you turn your biggest critics into the guardians of your quality. You don’t ask them to blindly trust the machine; you ask them to manage it.

One final tip: Build your own compass

Before we wrap up, I want to share where a lot of these insights came from.

I didn’t just rely on my own observations from the trenches. To structure the concepts, I spent some hours debating with Gemini. I treated the AI as a sparring partner, asking it to role-play as a change management expert to challenge my assumptions and refine these tactics.

I remember when I was a consultant at the beginning of my career, I had to read a stack of books on change management. Those books gave me great reflexes and models that I still use today. But books have one flaw: they are static. They give you the average advice for the average company. But your company is not average. Your culture is specific. Your blockers are unique.

Today, the best way to learn change management isn’t just to read a book; it’s to ask questions. Change management is, at its core, very simple: it’s just helping people move from point A to point B. But because every person is different, the help they need varies wildly. Some need reassurance, some need data, some need a challenge. You will have to repeat yourself, and you will have to adapt your pitch ten different ways.

So, rather than just giving you the fish, I want to give you the fishing rod. If you are stuck with a specific team or a specific battle we didn’t cover here, go ask the AI. But don’t ask it to write an email. Ask it to think with you.

Here is the exact prompt I used to start thinking about the broader challenges of AI transformation and how it impacts people. Feel free to adapt it and use it to debug your own specific challenges:

Imagine you are a change management expert and we have a simple conversation at the coffee machine. You have managed a lot of AI transformations in other companies so you know the main obstacles. You are my friend and you try to help me. I will ask you some questions and you will answer them with simple words, no jargon, and concrete analogies. Let’s start.

Treat the AI as your coach. It won’t do the change for you, but it will help you find the words to make the change happen.

If you take only one thing away from this, let it be this: AI adoption is 10% technology and 90% sociology.

We spend hours debating which model or tool is better, but we spend almost no time debating how to make teams feel safe enough to use them. As we have seen, the barrier isn’t that the tool is too hard to learn; the barrier is that the change feels too dangerous to accept.

Your job as a manager has changed. You’re no longer a lead ensuring the code ships. You are now the bridge:

You are the bridge between the head (strategy) and the heart (fear).

You are the bridge between the champions who want to run fast and the watchers who are afraid to fall.

You are the bridge between the efficiency of the machine and the craftsmanship of the human.

This transition will not be a straight line. You will hit the valley of despair. You will have days where the resistors make you want to quit, and days where the champions accidentally break production. That is not a sign of failure; that’s the messy, necessary work of evolution.

The goal is not to build a team that works for the AI, blindly following prompts. The goal is to build a team where the AI handles the drudgery (the boilerplate, the tests, the docs) so that your humans can finally do the job they were actually hired to do: think, create, and architect.

The tools will change next week. The principles of human psychology will not. Focus on the humans, and the tech will take care of itself.

Loved this comprehensive framework for navigating AI adoption! The valley of despair concept really clicked for me because I've been there myself. When teams hit that productivity dip, acknowledging it explicitly removes so much pressure. The Aikido move with redirecting energy is clever too because it turns objections into assignments rather than fighting them head-on.